What ultimately matters is turning data into meaningful information, because information is what businesses use to make decisions. To make things more interesting, businesses want flexibility and options in how that information is generated and used. Although data is the heart and soul of every business, many IT shops lack a clear vision of what their data architecture should look like to meet the business demand for a flexible, future-ready information system.

In this article, I will give you the key ingredients of a blueprint that IT shops can use to set up architectures for such systems. The blueprint is fully flexible and can be adopted at a pace the business is comfortable with.

Think of the blueprint in terms of the following acronym: SAS times 4, i.e., SAS * 4. Each of the four groups below contains three ingredients whose initials spell S-A-S.

SAS 1

- Sources:

- The architecture should be able to consume data from internal systems and integrate external data. An ERP system is an example of an internal source; a Twitter feed is an example of external data. Consumption can take many forms, including but not limited to an Enterprise Service Bus, APIs and manual imports. The key is to think deliberately about which sources matter to your business.

- Approval:

- A workflow tool is a good way to integrate data sources: incoming data is approved before it moves into a staging area. Approval can be automatic, based on certain thresholds, or the data can even run straight through. Your architecture should include such a facility, because it improves the quality of the data that reaches your authorized sources.

- Structure:

- A robust, future-ready data store should accommodate structured, semi-structured and unstructured data. Structured data typically lives in relational database management systems such as Oracle and SQL Server. Semi-structured data can take many forms, such as messages on a messaging bus, XML, JSON and CSV. Unstructured data usually comes in the form of Microsoft Excel spreadsheets or Microsoft Word documents. Most IT organizations struggle to find a good technical solution that lets all of these structures be stored and integrated in their architecture. This is one of the most important parts of the architecture and should be thought through carefully.
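The threshold-based approval described under Approval can be sketched in a few lines. This is a minimal Python sketch. It assumes that batch quality is measured as the share of records with every required field populated. The function name `approve_batch`, the field names and the threshold values are hypothetical. A real workflow tool would also record who approved what, and when.

```python
from dataclasses import dataclass


@dataclass
class ApprovalResult:
    decision: str        # "approved", "rejected" or "manual_review"
    completeness: float  # share of records with every required field populated


def approve_batch(records, required_fields, auto_approve=0.98, auto_reject=0.80):
    """Decide whether a batch of source records may enter the staging area.

    Hypothetical thresholds: at or above auto_approve the batch runs straight
    through; below auto_reject it is refused; anything in between is routed
    to a human reviewer in the workflow tool.
    """
    if not records:
        return ApprovalResult("rejected", 0.0)
    complete = sum(
        1 for r in records
        if all(r.get(f) not in (None, "") for f in required_fields)
    )
    completeness = complete / len(records)
    if completeness >= auto_approve:
        decision = "approved"
    elif completeness < auto_reject:
        decision = "rejected"
    else:
        decision = "manual_review"
    return ApprovalResult(decision, completeness)


if __name__ == "__main__":
    # Hypothetical batch from an internal ERP extract: one record lacks an amount.
    batch = [
        {"order_id": "A1", "amount": 120.0},
        {"order_id": "A2", "amount": 75.5},
        {"order_id": "A3", "amount": None},
        {"order_id": "A4", "amount": 10.0},
    ]
    result = approve_batch(batch, required_fields=["order_id", "amount"])
    print(result.decision, result.completeness)
```

The three-way outcome mirrors the options in the text: fully automatic approval, threshold-based routing, and rejection. How you score quality (completeness, schema checks, duplicate detection) is a design choice for each source.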

SAS 2

- Stage:

- If you want to keep your data architecture flexible, then you must bring the various sources together in a staging area before moving the data into central storage. APIs, an Enterprise Service Bus or ETL tools are some of the ways to move data into the staging area.

- Access:

- Design a variety of ways to access the data wherever possible. The right access mechanism depends on who the users are and what they will use the data for. For example, developers will likely need the ability to trace data to debug complex issues that arise in a production environment. End users may only need some canned reports. Power users may want to access the data for advanced analytics. Your architecture should be open enough to support various access methods, such as manual access, APIs and automatic extraction.

- Security:

- Securing your data will always be a requirement, now and in the future. This means that you must consider ways to encrypt your data both at rest (in storage or databases) and in transit (within or outside your network). Role-based access to data is likewise part of the minimum set of requirements that you must consider from day one.
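Role-based access can be sketched as a policy table that maps each role to the datasets and columns it may read, combined with the user groups described under Access. This is a minimal, hypothetical Python sketch. The role names, dataset names, columns and the `read_as` function are illustrative assumptions. In practice, such a policy is usually enforced by the database or an access-management service rather than by application code. Encryption at rest and in transit is not shown.

```python
# Hypothetical policy: role -> dataset -> columns the role may read.
POLICY = {
    "end_user": {"sales_summary": {"region", "quarter", "revenue"}},
    "power_user": {
        "sales_summary": {"region", "quarter", "revenue"},
        "sales_detail": {"order_id", "region", "quarter", "revenue", "customer_segment"},
    },
    "developer": {
        # In this illustrative policy, developers see lineage columns for
        # tracing production issues, but not revenue figures.
        "sales_detail": {"order_id", "region", "quarter", "source_system", "load_batch_id"},
    },
}


def read_as(role, dataset, rows):
    """Return only the columns of `rows` that `role` may see in `dataset`.

    Access is denied by default: an unknown role, or a dataset the role is
    not granted, raises PermissionError instead of returning data.
    """
    allowed = POLICY.get(role, {}).get(dataset)
    if allowed is None:
        raise PermissionError(f"role {role!r} may not read {dataset!r}")
    return [{col: val for col, val in row.items() if col in allowed} for row in rows]


if __name__ == "__main__":
    rows = [{
        "order_id": "A1", "region": "EMEA", "quarter": "Q1", "revenue": 120.0,
        "customer_segment": "SMB", "source_system": "ERP", "load_batch_id": 7,
    }]
    print(read_as("developer", "sales_detail", rows))
    print(read_as("power_user", "sales_detail", rows))
```

Denying by default keeps the policy safe as new datasets are added. A dataset is invisible to every role until someone explicitly grants access.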

SAS 3

- Standards:

- Modern data architectures require data to be transformed between relational form and semi-structured JSON (or similar) constructs. Your architecture should allow such standards to be implemented, so that you can deconstruct data from one form and reconstruct it in another. This usually means implementing some form of master ID management within an event-based messaging architecture, so that the same entity can be recognized in every form it takes. It also means you should hire a data architect who has coding skills, or at least a strong understanding of modern microservices. In addition, you must have the standard table-stakes processes in place for data governance, data stewardship, data taxonomy and data definitions.

- Algorithms:

- Power users love data. Beyond easy access to the data, power users manipulate it with advanced analytics platforms to predict business key performance indicators, such as next quarter's sales or even stock prices. Your architecture should provide hooks and APIs for easy integration with platforms that offer advanced analytics capabilities such as machine learning and AI.

- Skills:

- Your architecture is only as good as your team. If you want a flexible architecture that can easily accommodate your growing business, then you must analyze the skills of your resources, such as architects, DBAs, integrators and business stewards.
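The deconstruct/reconstruct requirement under Standards can be illustrated with a small round trip. A nested JSON customer document is split into relational-style rows (one customer row and one row per account). Each row carries the same master ID, and the rows are then rebuilt into the original document. This is a minimal Python sketch. The document shape, the field names and the use of `uuid4` as a stand-in for a master ID management system are assumptions, not a prescribed design.

```python
import json
import uuid


def deconstruct(doc, master_id=None):
    """Split a nested customer document into relational-style rows.

    Returns (customer_row, account_rows). Every row carries the same master_id,
    which is what lets the pieces be joined back together later. uuid4 is only
    a placeholder for a real master ID management service.
    """
    master_id = master_id or str(uuid.uuid4())
    customer_row = {"master_id": master_id, "name": doc["name"], "email": doc["email"]}
    account_rows = [
        {"master_id": master_id, "account_no": acct["account_no"], "balance": acct["balance"]}
        for acct in doc.get("accounts", [])
    ]
    return customer_row, account_rows


def reconstruct(customer_row, account_rows):
    """Rebuild the nested document from rows that share the customer's master_id."""
    mid = customer_row["master_id"]
    return {
        "master_id": mid,
        "name": customer_row["name"],
        "email": customer_row["email"],
        "accounts": [
            {"account_no": r["account_no"], "balance": r["balance"]}
            for r in account_rows
            if r["master_id"] == mid
        ],
    }


if __name__ == "__main__":
    incoming = json.loads(
        '{"name": "Ada", "email": "ada@example.com",'
        ' "accounts": [{"account_no": "001", "balance": 250.0},'
        ' {"account_no": "002", "balance": 40.0}]}'
    )
    customer, accounts = deconstruct(incoming)
    print(json.dumps(reconstruct(customer, accounts), indent=2))
```

In an event-based setup, the customer and account rows might be published as separate events. The shared master ID is what allows a downstream consumer to reassemble them.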

SAS 4

- Scale:

- Non-functional requirements (NFRs) are often overlooked. A good data architecture favors tools and solutions that stay flexible over time, without forcing you to rewrite code or reimplement on new technologies. Scalability is one such NFR. It is very important and must be implemented in a way that absorbs changes in data volume over time. Architects, DBAs, DevOps engineers and software engineers can achieve this if they give it enough thought.

- Automation:

- Do not make automation of DevOps processes an afterthought in your data architecture implementation. Automation gives you consistent, repeatable environments, which help in many ways, such as easy access, backup, disaster recovery and scaling.

- Store:

- Selecting database technologies is one of the most confusing tasks for IT shops. Where and how you store your data will depend on many factors, such as size, growth, structure and business purpose. Choose your databases so that they are unlikely to need replacing in the future.
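For the Scale ingredient, one common technique for absorbing data growth without rewriting the storage layer is consistent hashing. The blueprint does not prescribe this technique; it is shown here as one illustration. Records are assigned to storage nodes so that adding a node moves only a fraction of the existing keys, and every moved key lands on the new node. This is a minimal Python sketch. The node names and the assumed 100 virtual nodes per physical node are hypothetical. Production systems typically rely on the partitioning built into their database.

```python
import bisect
import hashlib


class ConsistentHashRing:
    """Map keys to storage nodes on a hash ring with virtual nodes."""

    def __init__(self, nodes=(), vnodes=100):
        self.vnodes = vnodes
        self._hashes = []  # sorted ring positions
        self._owners = {}  # ring position -> node name
        for node in nodes:
            self.add_node(node)

    @staticmethod
    def _hash(value):
        # MD5 is used only for an even spread of positions, not for security.
        return int(hashlib.md5(value.encode("utf-8")).hexdigest(), 16)

    def add_node(self, node):
        # Place `vnodes` positions for this node around the ring.
        for i in range(self.vnodes):
            h = self._hash(f"{node}#{i}")
            if h not in self._owners:
                bisect.insort(self._hashes, h)
                self._owners[h] = node

    def node_for(self, key):
        if not self._hashes:
            raise LookupError("ring has no nodes")
        # First ring position clockwise from the key's hash, wrapping around.
        idx = bisect.bisect_right(self._hashes, self._hash(key)) % len(self._hashes)
        return self._owners[self._hashes[idx]]


if __name__ == "__main__":
    keys = [f"customer-{n}" for n in range(1000)]
    ring = ConsistentHashRing(["db-1", "db-2", "db-3", "db-4"])
    before = {k: ring.node_for(k) for k in keys}
    ring.add_node("db-5")  # data volume grew, so add capacity
    moved = [k for k in keys if ring.node_for(k) != before[k]]
    print(f"{len(moved)} of {len(keys)} keys moved")
```

With a naive `hash(key) % number_of_nodes` scheme, going from four to five nodes would reassign most keys. On the ring, only the keys that fall into the new node's arcs move.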

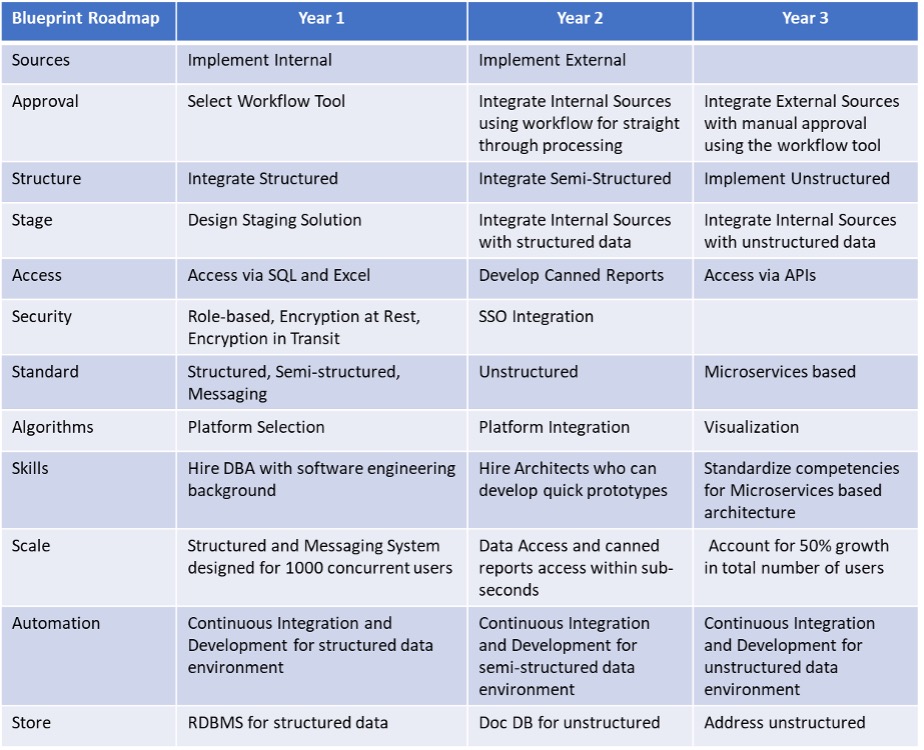

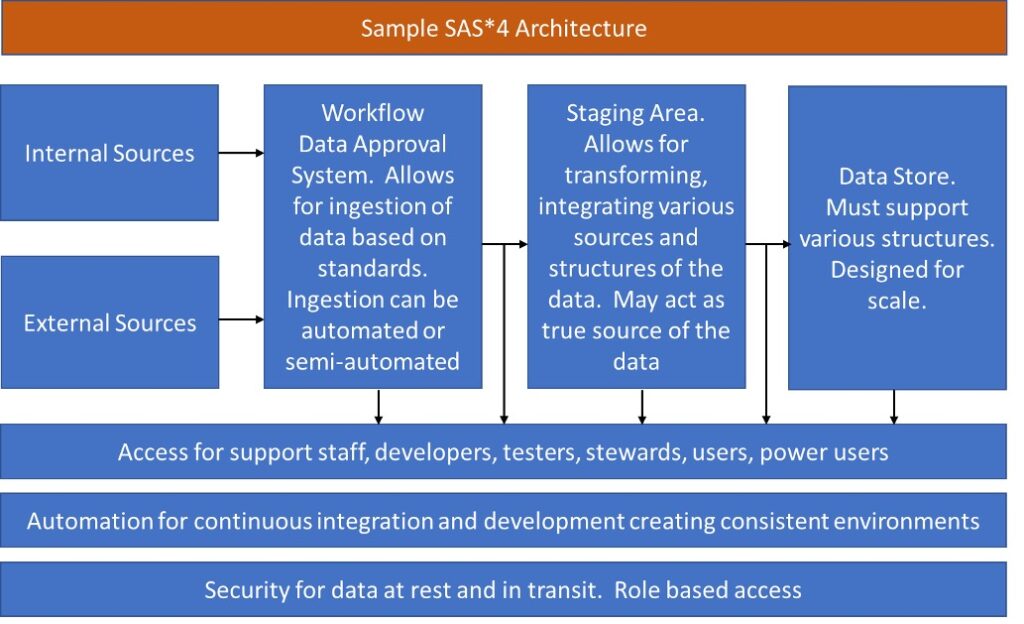

The flexibility in the SAS * 4 blueprint model comes from the right combination of each of these ingredients. The main message here is that your architecture should account for these key ingredients. You can create a roadmap or path for each of these key ingredients and reach your end goal. Figure 1 provides a sample roadmap for SAS * 4 model. Figure 2 provides a sample architecture.

Figure 1.0: Sample Roadmap using SAS * 4 Model

Figure 2.0: Sample Architecture. The lines represent enterprise integration systems like APIs, ETL etc.

Alok Mehta, Ph.D., Independent Technology Consultant

Alok Mehta is a veteran technology leader in the financial services space. Mr. Mehta served as Chief Technology Officer of GCM Grosvenor from 2015 to 2019, where he was responsible for overseeing the strategic implementation of technology and information systems for the firm. Prior to joining GCM Grosvenor, Mr. Mehta was the Chief Technology Officer at Continental Assurance Company, where he directed all aspects of technology strategy and infrastructure from 2012 to 2015. Mr. Mehta had similar responsibilities as Chief Architect at Allstate Financial Insurance Company from 2007 to 2012. From 2005 to 2007, Mr. Mehta was a Senior Vice President at Insurance Technologies. Mr. Mehta began his career at American Financial Systems as a Senior Consultant from 1990 to 1995 and later became the firm's Chief Technology Officer from 1995 to 2005. Mr. Mehta received his Bachelor of Science in Physics and Mathematics from the University of Rajasthan in 1987, his Bachelor of Business Studies in Finance from Southern New Hampshire University in 1988, his Master of Business Administration in Computer Information Systems from Plymouth State University in 1990, his Master of Science in Computer Engineering from Northeastern University in 1993, and his doctorate in Software Engineering from Worcester Polytechnic Institute in 2003.